Abstract

We present DIRECT-3D, a diffusion-based 3D generative model for creating high-quality 3D assets (represented by Neural Radiance Fields) from text prompts. Unlike recent 3D generative models that rely on clean and well-aligned 3D data, limiting them to single or few-class generation, our model is directly trained on extensive noisy and unaligned ‘in-the-wild’ 3D assets, mitigating the key challenge (i.e., data scarcity) in large-scale 3D generation. In particular, DIRECT-3D is a tri-plane diffusion model that integrates two innovations: 1) A novel learning framework where noisy data are filtered and aligned automatically during the training process. Specifically, after an initial warm-up phase using a small set of clean data, an iterative optimization is introduced in the diffusion process to explicitly estimate the 3D pose of objects and select beneficial data based on conditional density. 2) An efficient 3D representation that is achieved by disentangling object geometry and color features with two separate conditional diffusion models that are optimized hierarchically. Given a prompt input, our model generates high-quality, high-resolution, realistic, and complex 3D objects with accurate geometric details in seconds. We achieve state-of-the-art performance in both single-class generation and text-to-3D generation. We also demonstrate that DIRECT-3D can serve as a useful 3D geometric prior of objects, for example to alleviate the well-known Janus problem in 2D-lifting methods such as DreamFusion.

Method

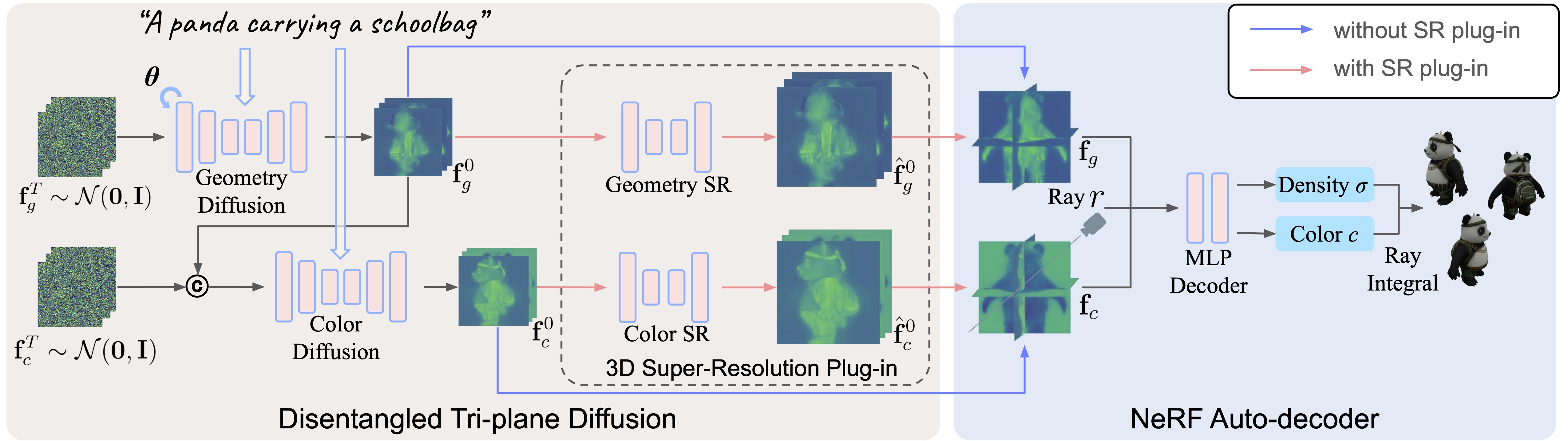

Given a prompt, we generate a NeRF with two modules. The disentangled tri-plane diffusion module uses two diffusion models (or four if the super-resolution plug-in is used) to generate the geometry (f_g) and color (f_c) tri-planes separately. Both tri-planes are then reshaped and fed into a NeRF auto-decoder to produce the final outputs. During training, an iterative optimization process is introduced in the geometry diffusion to explicitly model the object pose θ and select beneficial samples, enabling efficient training on noisy ‘in-the-wild’ data. The whole model is end-to-end trainable (with or without the 3D super-resolution plug-in), with only multi-view 2D images as supervision.
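To make the tri-plane representation more concrete, the sketch below shows one common way a 3D point can be turned into geometry and color features before a NeRF decoder consumes them. It is an illustration only, not the DIRECT-3D implementation: the plane ordering (XY, XZ, YZ), the summation of the three sampled features, the grid sizes, and all names (`bilinear`, `query_triplane`, `constant_plane`) are assumptions made for this example.

```python
from typing import List, Sequence

# One feature plane stored as nested lists: plane[row][col] -> channel vector.
Plane = List[List[List[float]]]


def bilinear(plane: Plane, u: float, v: float) -> List[float]:
    """Bilinearly interpolate a feature vector at (u, v) in [-1, 1]^2."""
    h, w = len(plane), len(plane[0])
    # Map [-1, 1] to continuous pixel coordinates, clamped to the grid.
    x = min(max((u + 1.0) * 0.5 * (w - 1), 0.0), w - 1.0)
    y = min(max((v + 1.0) * 0.5 * (h - 1), 0.0), h - 1.0)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    tx, ty = x - x0, y - y0
    return [
        (1 - tx) * (1 - ty) * a + tx * (1 - ty) * b
        + (1 - tx) * ty * c + tx * ty * d
        for a, b, c, d in zip(plane[y0][x0], plane[y0][x1],
                              plane[y1][x0], plane[y1][x1])
    ]


def query_triplane(planes: Sequence[Plane], p: Sequence[float]) -> List[float]:
    """Project p onto three axis-aligned planes and sum the sampled features.

    Assumption: planes are ordered (XY, XZ, YZ) and aggregated by summation;
    the source does not specify either choice.
    """
    x, y, z = p
    feats = [
        bilinear(planes[0], x, y),
        bilinear(planes[1], x, z),
        bilinear(planes[2], y, z),
    ]
    return [sum(channel) for channel in zip(*feats)]


def constant_plane(value: float, size: int = 4, channels: int = 2) -> Plane:
    """Hypothetical helper: a size x size plane filled with one value."""
    return [[[value] * channels for _ in range(size)] for _ in range(size)]


# Stand-ins for the outputs of the geometry and color diffusion models.
geometry_planes = [constant_plane(v) for v in (1.0, 2.0, 3.0)]
color_planes = [constant_plane(v) for v in (0.1, 0.2, 0.3)]

point = (0.25, -0.5, 0.75)
f_g = query_triplane(geometry_planes, point)  # geometry feature at the point
f_c = query_triplane(color_planes, point)     # color feature at the point
```

In a full pipeline, a decoder network would map the geometry feature to density and the color feature to RGB for volume rendering. Keeping the two feature sets in separate tri-planes is what allows geometry and color to be generated by separate diffusion models, as the caption describes.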

Direct Text-to-3D Generation

Improving 2D-lifting Methods with 3D Prior

Bibtex

@inproceedings{liu2024direct,
  title={DIRECT-3D: Learning Direct Text-to-3D Generation on Massive Noisy 3D Data},
  author={Liu, Qihao and Zhang, Yi and Bai, Song and Kortylewski, Adam and Yuille, Alan},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  pages={6881--6891},
  year={2024}
}